Specifications for digital pressure gauges can seem confusing or overwhelming, especially if you are unfamiliar with the terminology.

Some pressure sensors specify accuracy as a percent of full scale (FS), while others specify it as a percent of reading. So why are there different ways of specifying the accuracy of pressure sensors, and is percent of reading more accurate than percent of full scale, or vice versa? This brief technical note discusses the two methods and answers these questions.

Percentage of Reading Accuracy

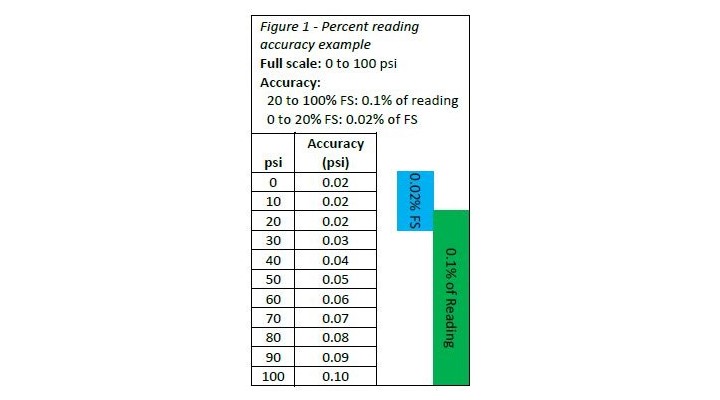

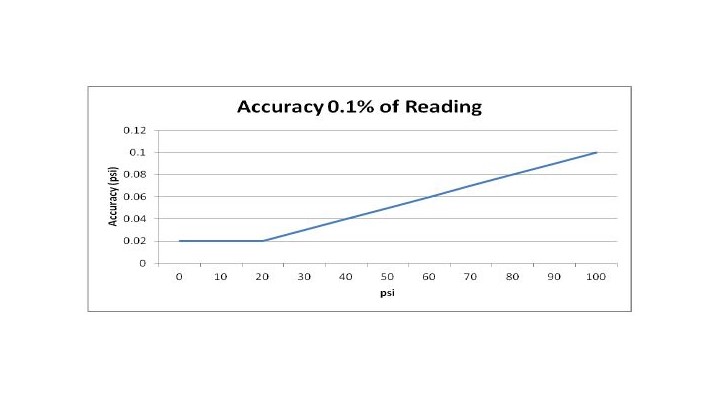

Accuracy as a percentage of reading is calculated by multiplying the accuracy percentage by the pressure reading. Thus, the lower the pressure being measured, the smaller the uncertainty in pressure units. Instruments that have a percent of reading specification are accompanied by a floor specification. The floor specification takes into account uncertainties such as resolution and measurement noise, which may be negligible at higher pressures but are much more significant at lower pressures.

For example, an accuracy specification may read 0.1% of reading for 20 to 100% of range and 0.04% of full scale below 20% of the range. The 0.04% of full scale specification is the floor specification. To work out the accuracy of the sensor, the user must know both where the floor specification applies and the full scale of the sensor.

This method of specification is often used because it aligns well with the typical performance of pressure gauges. Typically, the closer you measure to barometric pressure, the better the performance of the gauge. Figure 1 and the accompanying graph show an example specification for a 100 psi gauge and its accuracy in psi.
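The two-tier example above (0.1% of reading from 20 to 100% of range, with a 0.04% of full scale floor below 20%) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name and parameter names are hypothetical, the 100 psi range is taken from the example gauge, and the numbers are the ones quoted in the text rather than values read from Figure 1.

```python
def percent_of_reading_accuracy_psi(
    reading_psi,
    full_scale_psi=100.0,        # range of the example gauge
    pct_of_reading=0.1,          # 0.1% of reading, 20 to 100% of range
    floor_pct_of_fs=0.04,        # 0.04% of full scale floor specification
    floor_limit_fraction=0.20,   # floor applies below 20% of range
):
    """Return the accuracy band (+/- psi) at a given reading.

    Hypothetical helper for illustration only.
    """
    if not 0.0 <= reading_psi <= full_scale_psi:
        raise ValueError("reading is outside the gauge range")
    if reading_psi < floor_limit_fraction * full_scale_psi:
        # Below 20% of range the fixed floor specification applies.
        return floor_pct_of_fs / 100.0 * full_scale_psi
    # From 20% to 100% of range the band scales with the reading.
    return pct_of_reading / 100.0 * reading_psi


if __name__ == "__main__":
    for reading in (5.0, 10.0, 20.0, 50.0, 100.0):
        band = percent_of_reading_accuracy_psi(reading)
        print(f"{reading:6.1f} psi -> +/-{band:.3f} psi")
```

With these numbers the band is ±0.04 psi anywhere below 20 psi, ±0.05 psi at 50 psi, and ±0.1 psi at 100 psi, which shows why both the floor limit and the full scale are needed to interpret the specification.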

Percentage of Full Scale Accuracy

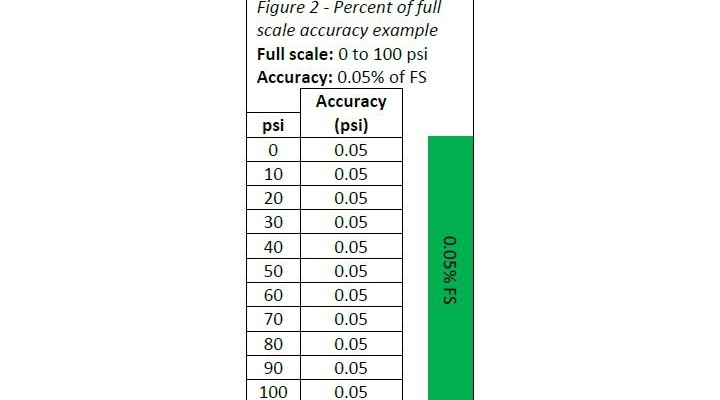

Accuracy as a percentage of full scale is calculated by multiplying the accuracy percentage by the full scale pressure of the gauge. This is a simpler method of specification and is the one most commonly used in industry because it is easy to calculate and interpret. Denoting the accuracy as a percent of full scale is a more conservative way of specifying the pressure sensor, because the sensor typically does not perform the same over its full range.

It will usually perform more accurately as you approach barometric pressure. This type of specification is most common for industrial gauges, and it makes it easier to compare one gauge with another. Figure 2 is an example specification for a 100 psi gauge and its accuracy in psi.
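As a rough sketch of the calculation described above, the snippet below converts a percent of full scale specification to psi and then expresses that fixed band as a percentage of the reading. The function names are hypothetical, and the 0.05% of full scale value is an assumption borrowed from the comparison later in this note, not a value read from Figure 2.

```python
def full_scale_accuracy_psi(full_scale_psi=100.0, pct_of_fs=0.05):
    """Return the accuracy band (+/- psi); it is the same at every reading."""
    return pct_of_fs / 100.0 * full_scale_psi


def band_as_percent_of_reading(reading_psi, full_scale_psi=100.0, pct_of_fs=0.05):
    """Express the fixed full scale band relative to a given reading."""
    if reading_psi <= 0.0:
        raise ValueError("reading must be positive")
    band_psi = full_scale_accuracy_psi(full_scale_psi, pct_of_fs)
    return band_psi / reading_psi * 100.0


if __name__ == "__main__":
    print(f"band: +/-{full_scale_accuracy_psi():.3f} psi at any reading")
    for reading in (10.0, 50.0, 100.0):
        rel = band_as_percent_of_reading(reading)
        print(f"{reading:6.1f} psi -> {rel:.2f}% of reading")
```

Under these assumptions the band stays at ±0.05 psi across the whole range, which is 0.05% of the reading at 100 psi but 0.5% of the reading at 10 psi.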

A Comparison of Percent of Full Scale and Percent of Reading Accuracies

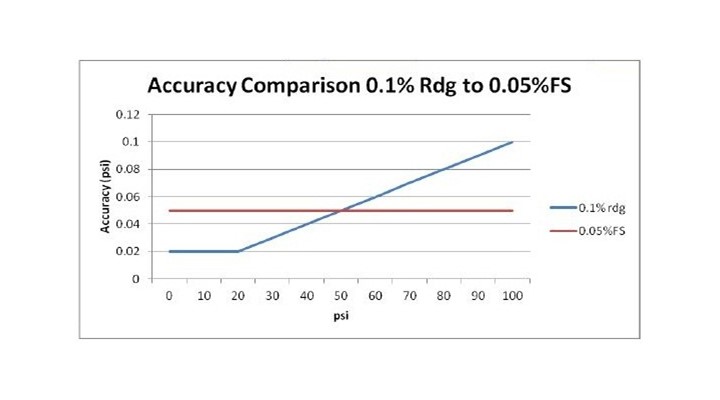

So you may ask, "Which is more accurate?" The answer is that it depends on the pressure being measured. In the two examples given, the gauge specified at 0.1% of reading is more accurate at the lower pressures in its range. However, as you move above 50% of the range, the gauge specified at 0.05% of full scale becomes more accurate than the 0.1% of reading gauge. This can be seen clearly in the accompanying chart and graph, where the two gauges are compared in terms of psi accuracy.
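The crossover described above can be checked numerically. The sketch below compares the two 100 psi example gauges in psi and computes the pressure at which the two bands are equal. All names are hypothetical, and it assumes that the percent of reading gauge carries the 0.04% of full scale floor below 20% of range from the earlier example; the actual figures may use different values.

```python
import math

FULL_SCALE_PSI = 100.0   # both example gauges are 100 psi gauges
PCT_OF_READING = 0.1     # gauge A: 0.1% of reading
FLOOR_PCT_OF_FS = 0.04   # gauge A: assumed floor below 20% of range
FLOOR_LIMIT = 0.20
PCT_OF_FS = 0.05         # gauge B: 0.05% of full scale


def reading_spec_psi(reading_psi):
    """Accuracy band (+/- psi) of the percent of reading gauge."""
    if reading_psi < FLOOR_LIMIT * FULL_SCALE_PSI:
        return FLOOR_PCT_OF_FS / 100.0 * FULL_SCALE_PSI
    return PCT_OF_READING / 100.0 * reading_psi


def full_scale_spec_psi():
    """Accuracy band (+/- psi) of the percent of full scale gauge."""
    return PCT_OF_FS / 100.0 * FULL_SCALE_PSI


def better_spec(reading_psi):
    """Name the gauge with the smaller band at this reading."""
    a, b = reading_spec_psi(reading_psi), full_scale_spec_psi()
    if math.isclose(a, b):
        return "equal"
    return "percent of reading" if a < b else "percent of full scale"


# The bands are equal where PCT_OF_READING * p == PCT_OF_FS * FULL_SCALE_PSI.
crossover_psi = PCT_OF_FS / PCT_OF_READING * FULL_SCALE_PSI

if __name__ == "__main__":
    print(f"crossover at {crossover_psi:.1f} psi")
    for reading in (10.0, 30.0, 50.0, 80.0):
        print(f"{reading:6.1f} psi -> {better_spec(reading)}")
```

With these values the crossover falls at 50 psi, in line with the 50% of range figure quoted above; the percent of reading gauge has the smaller band below that point, including in the floor region, and the percent of full scale gauge has the smaller band above it.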

To properly compare these two gauges, you should convert the accuracy to pressure units, such as psi or bar. Then they can be matched one against the other in like units of measure. In conclusion, one method of specification is not better than the other; it is just different. Given this difference, it becomes important to know how to interpret the different specification types and to be able to compare one with another.